Making Music using Machine Learning: Music Making Machine (M3)

Artash Nath, 13 years. Robots are usually associated with automation, science, and engineering. But can robots have other talents? If you play a melody to a robot, would it be […]

HotPopRobot

Artash Nath, 13 years.

Robots are usually associated with automation, science, and engineering. But can robots have other talents?

If you play a melody to a robot, would it be able to comprehend it and come up with a musical response? Can it learn music, compose its own music, compete with humans, and even surpass them? Could robots be the next Jonas Brothers, Imagine Dragons, or Mozart?

This was an interesting idea. I have been working on machine learning projects since last year, including predicting the risk of an asteroid colliding with Earth and building robots that detect human emotions. I wanted to see how far I could go in building an artificially intelligent machine that could learn and play music. The input to the machine would be a melody played by a human on a piano. The machine should be able to come up with a musical response to that melody, and the response should sound pleasant to the human ear.

I drew inspiration from the Fruit Genie project, a real-time AI-powered musical instrument that combines a model from Google's Magenta project with a physical interface made of fruits. My entire project was built using Google's TensorFlow and Python.

My project had 3 parts:

1. Machine Learning Algorithm

2. Training Data Set

3. User Input

Machine Learning Algorithm

I needed an algorithm that would let the machine learn what music is by processing small pieces of music to detect and create patterns. Over time, it would develop a better understanding of music and be able to create new music or build upon melodies created by humans.

The algorithm I found most suitable for this task was a Recurrent Neural Network (RNN). An RNN is a class of artificial neural network that takes a sequence and goes through its items one by one, all the while remembering what came before. After being trained on many such sequences, it learns the patterns in them and, with enough training, can build upon a new sequence of the same type.

An RNN differs from a traditional feed-forward neural network, which treats each input independently of the others. An RNN can be imagined as multiple copies of the same network, each passing a message to its successor. At every step, the network takes the knowledge carried over from the previous step, updates it with the current item, and passes it on to the next step. In this way, some information is retained and carried along the chain. The process is repeated until a final output is obtained.
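The "same network, passing a message to its successor" idea can be sketched in a few lines of plain Python. This is only an illustration with a single recurrent unit and made-up weights (`w_in`, `w_rec`, and `bias` are assumptions, not values from my project); it shows that the final state depends on the order of the inputs, not just on which inputs were seen.

```python
import math

def rnn_step(x, h_prev, w_in=0.5, w_rec=0.8, bias=0.0):
    """One step of a single-unit recurrent cell (illustrative weights)."""
    # The new state mixes the current input with the state carried over.
    return math.tanh(w_in * x + w_rec * h_prev + bias)

def run_rnn(sequence, h0=0.0):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    h = h0
    states = []
    for x in sequence:
        h = rnn_step(x, h)  # the same weights are reused at every step
        states.append(h)
    return states

# Two toy "melodies" with the same values in a different order.
melody_up = [0.1, 0.5, 0.9]
melody_down = [0.9, 0.5, 0.1]

final_up = run_rnn(melody_up)[-1]
final_down = run_rnn(melody_down)[-1]
print(final_up, final_down)  # the two final states differ
```

A real RNN uses vectors and weight matrices learned during training instead of single numbers, but the loop has the same shape.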

The most important feature of an RNN is its hidden state, which remembers some information about the sequence seen so far. My RNN had 3 layers with 256 Long Short-Term Memory (LSTM) cells in each one.

Long Short-Term Memory (LSTM) networks are a type of recurrent neural network used in deep learning. They are capable of learning order dependence in sequence prediction problems, a behavior required in complex problem domains such as machine translation and speech recognition.
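To give a feel for how an LSTM cell keeps information over many steps, here is a rough single-unit sketch of the standard LSTM update in plain Python. It is not the TensorFlow code from my project: the function `lstm_step`, the weight dictionary, and all the numbers are assumptions chosen for illustration. The weights below force the forget gate almost fully open and the input gate almost closed, so the cell state is held nearly unchanged while new inputs arrive.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, w):
    """One step of a single-unit LSTM cell.

    w maps each gate name to a tuple (input weight, recurrent weight, bias).
    """
    def pre(gate):
        w_x, w_h, b = w[gate]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))       # how much of the old cell state to keep
    i = sigmoid(pre("input"))        # how much new information to write
    o = sigmoid(pre("output"))       # how much of the cell state to expose
    g = math.tanh(pre("candidate"))  # the new information itself
    c = f * c_prev + i * g           # updated cell state (long-term memory)
    h = o * math.tanh(c)             # updated hidden state (output)
    return h, c

# Illustrative weights: keep the memory, ignore new inputs.
remember = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}

h, c = 0.0, 0.7
for x in [0.3, -0.8, 0.5]:
    h, c = lstm_step(x, h, c, remember)
print(c)  # stays close to the starting value of 0.7
```

In a trained network the gates are not fixed like this. They are learned, so the cell decides for itself when to remember and when to overwrite.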

Training Data Set

To enable the machine to learn, I needed to create a database of human-made music. I did so by bringing together 5000 MIDI melodies of different genres, lengths, tempos, and beats. Each of the 5000 songs was first broken down into its individual tracks.

Each track, in turn, was split into lists of 48 notes. These lists were the basic units on which the machine learning would happen.
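The splitting step might look something like the sketch below, reading the description as fixed-length lists of 48 notes. The function name `split_into_chunks` is made up for this example, the notes are represented as MIDI pitch numbers, and dropping an incomplete final list is an assumption rather than a detail of my project.

```python
def split_into_chunks(notes, chunk_size=48):
    """Split one track's notes into consecutive lists of chunk_size notes.

    Assumption: leftover notes that do not fill a whole list are dropped.
    """
    usable = len(notes) - len(notes) % chunk_size
    return [notes[i:i + chunk_size] for i in range(0, usable, chunk_size)]

# A made-up track of 100 notes, written as MIDI pitch numbers.
track = [60 + (i % 12) for i in range(100)]

chunks = split_into_chunks(track)
print(len(chunks), [len(chunk) for chunk in chunks])
```

Fixed-length lists make it simple to feed many examples to the network in batches, which is one common reason for preparing sequence data this way.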

I trained my Recurrent Neural Network (RNN) on these files. The RNN went through each list of notes several thousand times (epochs) to improve, adapt, and become better at responding to user inputs. It learned how to identify the different patterns in music, and which patterns are more melodic and closer to the input melodies than others. I stopped training once I was satisfied with how well it responded to melodies from popular nursery rhymes such as “Twinkle, Twinkle, Little Star” and “Mary Had a Little Lamb”.

Now given a new melody, it can try to predict the notes that would follow and the pattern of those notes that would make the most suitable response.
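Predicting the notes that follow works as a loop: predict one note, add it to the melody, and predict again. The sketch below shows only that loop. To keep it short and runnable without TensorFlow, the trained RNN is swapped for a much simpler stand-in that counts which note most often follows each note, so this is not my actual model. The names `fit_transitions`, `predict_next`, and `respond` are hypothetical, and here the response is simply given as many notes as the input.

```python
from collections import Counter, defaultdict

def fit_transitions(melodies):
    """Count, for every note, which notes follow it in the training melodies."""
    table = defaultdict(Counter)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            table[current][following] += 1
    return table

def predict_next(table, history):
    """Stand-in for the RNN: pick the most common follower of the last note."""
    options = table.get(history[-1])
    if not options:
        return history[-1]  # unseen note: fall back to repeating it
    return options.most_common(1)[0][0]

def respond(table, melody):
    """Generate a response with as many notes as the input melody."""
    history = list(melody)
    for _ in range(len(melody)):
        history.append(predict_next(table, history))
    return history[len(melody):]

# Tiny made-up training set, written as MIDI pitch numbers.
training = [
    [60, 60, 67, 67, 69, 69, 67],
    [65, 65, 64, 64, 62, 62, 60],
]
table = fit_transitions(training)
response = respond(table, [60, 60, 67])
print(response)
```

The real model looks at the whole melody so far through its hidden state, not just the last note, which is what lets it pick up longer patterns.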

User Input

How would people provide musical input to my robot? This was the most exciting step of my project.

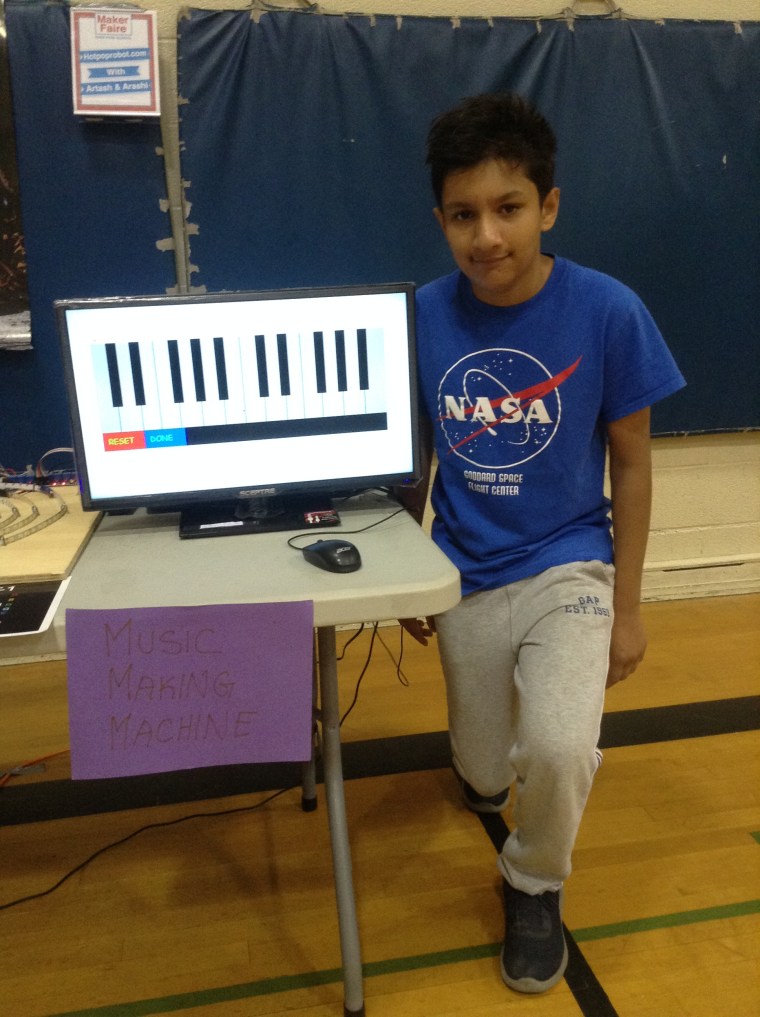

To make it easy for anyone to use my machine, I created a virtual piano (by programming it in Python). It has 2 octaves with a total of 24 keys, ranging from C4 (middle C) to B5.
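As a small illustration of the keyboard layout, the 24 keys can be mapped to MIDI note numbers and note names as below. This assumes the common convention in which middle C (C4) is MIDI note 60; the names `key_to_midi` and `midi_to_name` are made up for this sketch and are not taken from my piano program.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
FIRST_MIDI_NOTE = 60  # assumption: C4 (middle C) is MIDI note 60
NUM_KEYS = 24         # two octaves, C4 to B5

def key_to_midi(key_index):
    """Convert a key position (0 = leftmost key) to a MIDI note number."""
    if not 0 <= key_index < NUM_KEYS:
        raise ValueError(f"key index must be between 0 and {NUM_KEYS - 1}")
    return FIRST_MIDI_NOTE + key_index

def midi_to_name(midi_note):
    """Convert a MIDI note number to a name such as 'C4'."""
    octave = midi_note // 12 - 1
    return f"{NOTE_NAMES[midi_note % 12]}{octave}"

keyboard = [midi_to_name(key_to_midi(k)) for k in range(NUM_KEYS)]
print(keyboard[0], keyboard[-1], len(keyboard))
```

Working with note numbers instead of names keeps the piano's output in the same form as the MIDI training data.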

Users can simply play the piano, and its output goes directly into the algorithm I have written. Once the user finishes playing, the robot generates a musical response. The duration of the response matches the duration of the input provided by the user.

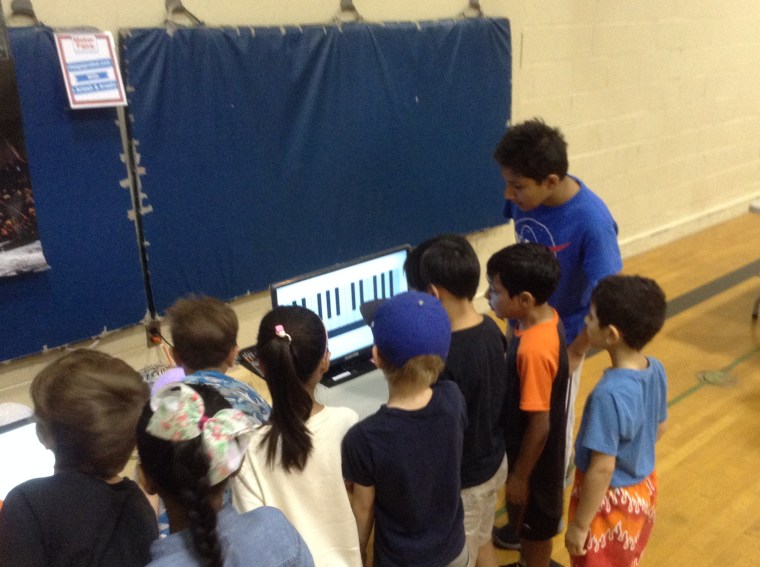

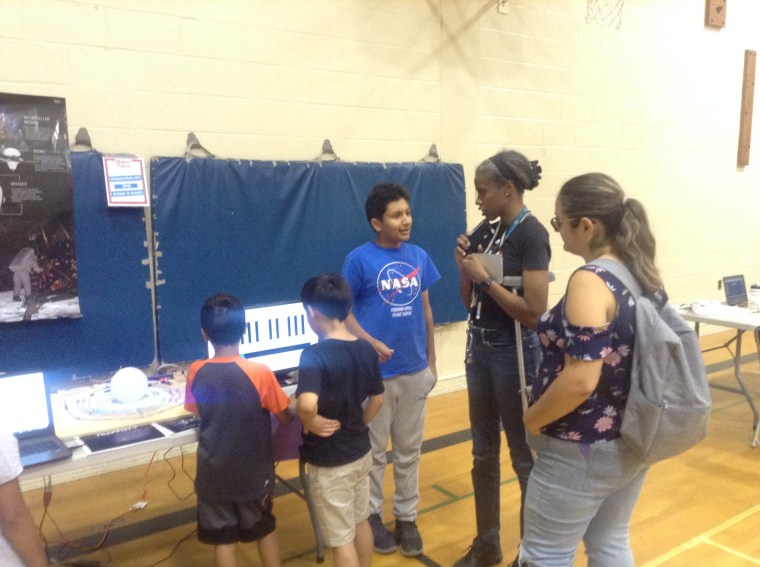

Project Outreach

We tested our robot in schools, churches, and on the streets as part of our exhibits on machine learning and our Moon shot and Apollo 11 celebrations.

It was wonderful to see how quickly the users were able to understand the project and play with it without any user instructions. A range of musical inputs were given by the users through the virtual piano and the robot delivered an appropriate musical response at each instance.

Future and Applications

This type of algorithm has several applications. For example, instead of music, it could be trained on the paths of planes tracked by radar and then predict where a plane is most likely to go next. If trained on the human voice, it might even be able to respond in the same language when a human asks it a question. It could also be adapted to decipher the sounds of birds, animals, and underwater creatures.

To improve it in the future, I will keep training the model on more songs and fine-tune its training parameters, such as the learning rate and the number of patterns it learns while training.